The word Energy comes from the Greek ένέργεια (energeia), meaning activity or operation, while the word Electricity comes from the Greek ήλεκτρον (electron), meaning amber, because electrical effects were classically produced by rubbing amber. Since antiquity, people have been fascinated by electrical phenomena, but it was in the 19th century that electricity turned from a scientific curiosity into an essential tool for modern life. Electricity was in fact a major driving force of the Second Industrial Revolution, that is to say, an important technology that contributed to mass production.

Electricity was then deployed across the territory of the industrialized nations, and the grid grew to accommodate increasing demand until electricity became a commodity. However, this growth was based on the business model introduced by Thomas Edison, in which utilities owned the plants that generated electricity, the transmission lines that carried it to substations, and the wires that distributed it to customers. Despite the waves of liberalization that started in the 1980s, the grids did not improve their capacity to expand, and we have seen serious blackouts affecting millions of people, such as the 2003 Northeast Blackout in the United States of America (USA) and the 2003 Italy Blackout, both caused by a lack of reliability and the ageing of the grid. It is also a fact that during the first half of the 20th century utilities had unlimited access to cheap fossil fuels and had no incentive to upgrade their inefficient old plants. In recent decades, we have been witnessing a rise in energy consumption in the so-called BRICS[1] countries, and we can expect electricity consumption to rise further and, with it, concern about fossil fuels and their impact on the environment. As a result, environmental concerns are also playing a very important part in energy planning. One key measure identified to tackle the transition to a sustainable, low-carbon industry is the expansion of Renewable Energy Sources (RES) and, more than that, their integration into the electricity grid. Beyond the environmental benefits, the aim is to help overcome the limitations of storage capacity while remaining economically efficient.

Thus, the electric grid needs to change to meet the challenges of improved load control and increased generation from renewables. There are two views on how to achieve those goals: one is to adapt the current grid by conventional means, the “Dumb Grid”[2], so that it integrates a high share of RES; the other is to get there by means of a more automated and integrated grid that brings intelligence through information and communication technologies and metering, from generation all the way to the final consumers. The second view is the Smart Grid (SG), an electrical grid that incorporates Information and Communications Technology (ICT) and Smart Meters (SM). The drivers for SG are several and differ depending on the geopolitical domain considered. For example, the main drivers for SG in the USA are the ageing and security of the grid, while the EU seems to be more motivated by the 20-20-20 objectives[3]. The SG is seen as a means (not an end) of meeting the global energy challenge that countries face in the coming years. Wherever these changes take place, SG development will be part of a major change to the way electricity is generated, transmitted, distributed and used, and, like any substantial change in national infrastructure, the costs will be challenging.

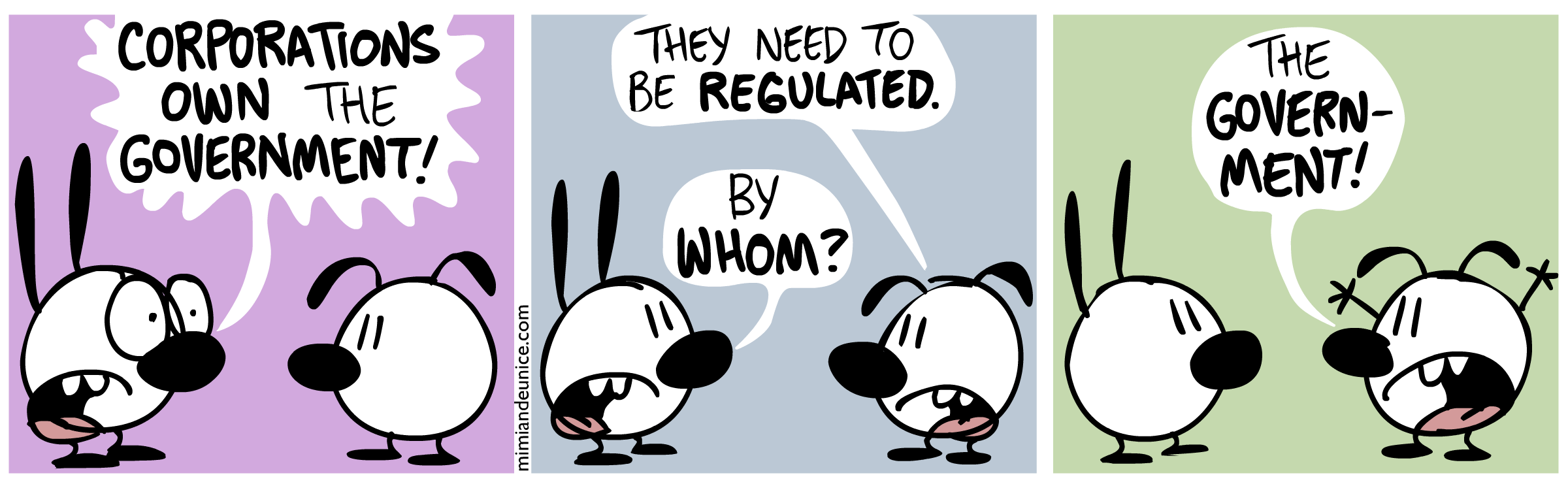

However, technology alone won't fix all the concerns about the grid, as they are not mainly a technological problem. Instead, they relate to a whole system in which political gridlock, inefficient markets, and shortsighted planning have created bottlenecks that cannot be solved solely with millions of Smart Meters and ICT infrastructure. Without further policy stability, appropriate regulatory incentives, and more investment, the Smart Grid may not be realized, with the consequence of falling behind in what can be a new global growth market and a source of prosperity and jobs for years to come.

[1] BRICS is the title of an association of leading emerging economies, arising out of the inclusion of South Africa into the BRIC group in 2010. As of 2012, the group's five members are Brazil, Russia, India, China and South Africa. With the possible exception of Russia, the BRICS members are all developing or newly industrialized countries, but they are distinguished by their large, fast-growing economies and significant influence on regional and global affairs. (Source: http://en.wikipedia.org/wiki/BRICS)

[2] “Dumb grid” is a term used to refer to the traditional grid, which is seen as being based on limited information and leaving no real control to consumers. A dumb grid demands a large amount of physical infrastructure, which in practice means that more cables need to be laid, because it cannot rely on smart distribution of intermittent energy through ICT to compensate for peaks in supply or demand.

[3] The climate and energy package is a set of binding legislation which aims to ensure the European Union meets its ambitious climate and energy targets for 2020. These targets, known as the "20-20-20" targets, set three key objectives for 2020: a 20% reduction in EU greenhouse gas emissions from 1990 levels; raising the share of EU energy consumption produced from renewable resources to 20%; and a 20% improvement in the EU's energy efficiency. (Source: http://ec.europa.eu/clima/policies/package/index_en.htm)